If you’re asking whether Frase AI visibility tracking helps with GEO in 2026, you’re already ahead of the shallow conversation.

The useful question isn’t “will this make me rank?” It’s: can I measure whether AI engines mention or cite my brand—and can I do anything practical with that signal?

One shift is already visible: more of the research journey now happens inside AI answers, where users may never click a blue link. That creates a measurement problem for brands, especially if competitors are being recommended and cited while you’re invisible.

My stance: tracking is only worth paying attention to if you can turn it into a content action loop. Otherwise it’s just a new dashboard to worry about.

What “AI visibility tracking” and “GEO” actually mean

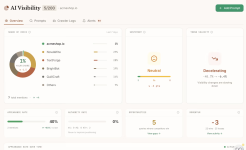

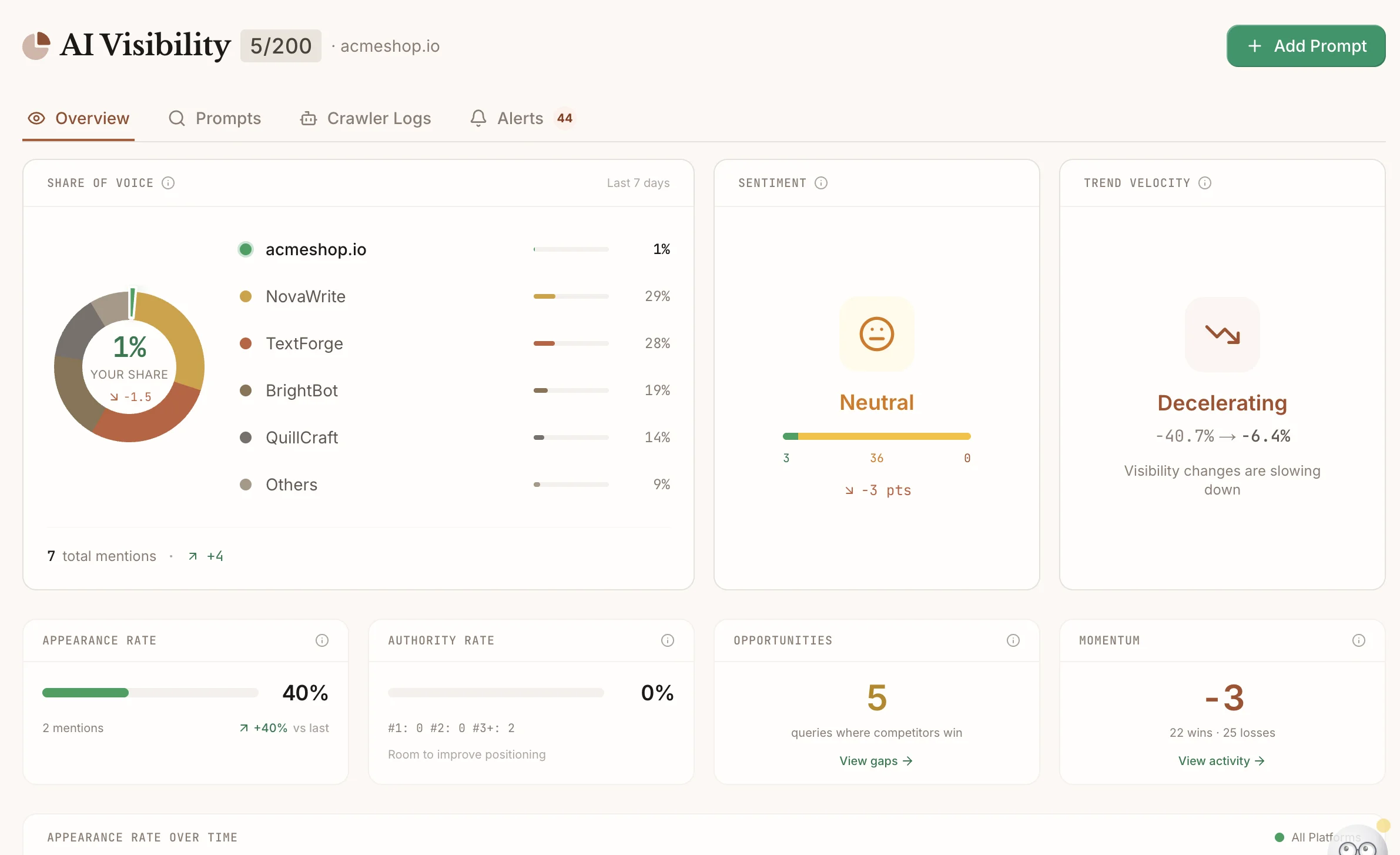

AI visibility tracking is monitoring how often (and where) your brand shows up inside AI-generated answers for a set of prompts that matter to your category—things like “best X,” “X vs Y,” “how to choose X,” and “alternatives to X.”

GEO (Generative Engine Optimization) is the content and site work you do to increase the odds that AI systems cite or recommend you: clearer structure, stronger authority signals, direct answers, and content that’s easy to extract and trust.

The reframe most teams need: GEO is not “a new SEO.” It’s closer to making your content easier to cite and harder to ignore, while still doing the fundamentals that make SEO work.

What problem AI visibility tracking solves (and what it doesn’t)

It solves: “We have no idea whether AI engines mention us, cite us, or recommend competitors instead.” That’s a real problem if your category is actively researched in AI tools.

It doesn’t solve: weak positioning, thin content, unclear category fit, or a brand that simply isn’t the best answer for common prompts. Tracking can’t manufacture demand.

Here’s the candid caution: AI visibility data can feel actionable even when it’s mostly noise. If you don’t have a clear set of prompts that tie to real pipeline or revenue, you can end up optimizing for vibes.

Who actually needs this now (and who should ignore it)

You’re a good fit for AI visibility tracking if:

- You sell in a category that gets researched in AI tools (software, services, education, consumer products with comparisons).

- You already have content assets (guides, comparisons, “best of” pages, product explainers) that could realistically be cited.

- You can ship updates (you have editorial bandwidth to act on what you learn).

- You have competitors worth tracking and you want to understand where they’re winning prompts.

It’s probably premature if:

- You don’t publish consistently or you don’t have a content refresh habit.

- Your brand is early and you’re still figuring out product-market fit and positioning.

- You can’t act on the data (no time to write or update pages).

Where Frase fits in a GEO + AI visibility workflow

Frase’s best-case fit is when you want one platform to connect:

- Tracking: prompts, platforms, mentions/citations, competitor gaps

- Action: build or update content with a workflow that supports SEO + GEO structure

- Iteration: re-check performance over time and focus on the prompts that matter

If you want a broader “is this tool worth it” evaluation beyond AI visibility, start here: see whether Frase fits your workflow.

A practical setup: prompts first, dashboards second

If you want AI visibility tracking to be useful, start with prompts that map to real buyer intent. I’d usually build a small set like:

- Category prompts: “best [category] tools,” “top [category] software,” “best [category] for small teams”

- Comparison prompts: “[your brand] vs [competitor],” “alternatives to [competitor]”

- Problem prompts: “how to [solve problem],” “how to choose [category]”

- Use-case prompts: “best [category] for [industry],” “best [category] for [role]”
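If you keep the prompt set in a spreadsheet, generating it from templates helps you cover every category, competitor, and use case consistently. Here is a minimal Python sketch, assuming you fill in your own category, brand, competitors, and use cases; the template strings, the `build_prompt_set` helper, and the example names are hypothetical and are not part of Frase or any tracking tool.

```python
from itertools import product

# Hypothetical templates mirroring the four prompt groups above.
TEMPLATES = {
    "category": [
        "best {category} tools",
        "top {category} software",
        "best {category} for small teams",
    ],
    "comparison": ["{brand} vs {competitor}", "alternatives to {competitor}"],
    "problem": ["how to {problem}", "how to choose {category}"],
    "use_case": ["best {category} for {industry}", "best {category} for {role}"],
}


def build_prompt_set(category, brand, competitors, problem, industries, roles):
    """Expand TEMPLATES into an ordered, deduplicated list of (group, prompt) pairs."""
    if not (competitors and industries and roles):
        raise ValueError("competitors, industries and roles must each be non-empty")
    seen = set()
    prompts = []
    for group, templates in TEMPLATES.items():
        for template in templates:
            for competitor, industry, role in product(competitors, industries, roles):
                # str.format ignores keyword arguments a template does not use,
                # so templates without a given slot repeat and are deduplicated here.
                text = template.format(
                    category=category, brand=brand, competitor=competitor,
                    problem=problem, industry=industry, role=role,
                )
                if text not in seen:
                    seen.add(text)
                    prompts.append((group, text))
    return prompts


prompts = build_prompt_set(
    category="project management",
    brand="ExampleCo",
    competitors=["RivalOne", "RivalTwo"],
    problem="run sprint planning",
    industries=["agencies"],
    roles=["product managers"],
)
for group, text in prompts:
    print(f"{group:<10} {text}")
```

With two competitors, one industry, and one role, this yields 11 prompts, which is roughly the size of set you can actually review and act on.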

Then ask one hard question: if we improve visibility on these prompts, do we actually win anything? If the answer is unclear, you’re probably not ready for a tracking program yet.

How to act on the data without chasing noise

AI visibility tracking is useful when it triggers specific content moves. In practice, that usually looks like:

- Create a missing page type: if you’re absent on “best” prompts, you may need a serious comparison/roundup page that’s actually helpful.

- Upgrade “citation friendliness”: add clear definitions, direct answers, structured sections, and credible sourcing.

- Refresh an existing asset: if you’re mentioned but not cited, the page may need clearer proof, stronger structure, or better topical completeness.

- Fix thin sections: if AI tools summarize competitors as “more complete,” your content likely has gaps that readers also feel.
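One way to keep these moves tied to data is to write the decision rules down explicitly. The sketch below assumes you log, for each prompt, whether your brand was mentioned, whether one of your pages was cited, which competitors were cited, and any notes from reading the answer yourself. The `PromptResult` record and the `suggest_action` rules are hypothetical heuristics, not a Frase feature, and the order of the checks is a judgment call you should adjust.

```python
from dataclasses import dataclass, field


@dataclass
class PromptResult:
    """One observation of one prompt (hypothetical record shape)."""
    prompt: str
    group: str  # e.g. "category", "comparison", "problem", "use_case"
    mentioned: bool  # brand named anywhere in the AI answer
    cited: bool  # one of your URLs shown as a source
    competitors_cited: list[str] = field(default_factory=list)
    notes: str = ""  # free-text notes you write after reading the answer


def suggest_action(r: PromptResult) -> str:
    """Map one result to a content move using simple, hypothetical rules."""
    if r.cited:
        return "monitor"
    # Qualitative signal noted by hand, e.g. a competitor summarized as "more complete".
    if "more complete" in r.notes.lower():
        return "fix thin sections"
    # Absent on a "best"-style prompt: you may be missing a page type entirely.
    if not r.mentioned and r.group == "category":
        return "create missing page type"
    # Mentioned but not cited: the page exists but may lack proof or structure.
    if r.mentioned:
        return "refresh existing asset"
    # Absent while competitors are cited: make your answers easier to extract.
    if r.competitors_cited:
        return "upgrade citation friendliness"
    return "check prompt relevance before acting"


r = PromptResult("best project management tools", "category",
                 mentioned=False, cited=False, competitors_cited=["RivalOne"])
print(suggest_action(r))  # create missing page type
```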

Brief quality matters here more than most teams admit. If you want a structured way to turn SERP + prompt insight into a writer-ready plan, see how to build a better SEO content brief with Frase.


Common mistakes teams make with AI visibility tracking

- Tracking too many prompts: you end up with dashboards and no action.

- Optimizing for mentions instead of relevance: being mentioned in the wrong context doesn’t help.

- Ignoring content maintenance: visibility changes won’t stick if your content decays.

- Confusing “cited” with “trusted”: citations can be unstable; build the underlying content quality.

- Skipping the competitive question: “why are they cited and we aren’t?” is the real path to action.

A gentler verdict: if this feels like a lot, that’s a signal. AI visibility is easiest to adopt when your SEO/content ops are already steady. If you’re still fighting basics, do that first.

Frequently Asked Questions

Do I need AI visibility tracking if my SEO is strong?

Not automatically. If your category isn’t being researched heavily in AI tools (or you can’t act on the insights), tracking may be premature. If AI answers are a real part of your buyers’ journey, it can be worth monitoring—especially for competitive prompts.

Is GEO separate work from SEO?

There’s overlap. Clear structure, direct answers, and strong topical coverage help both. GEO tends to emphasize citability and machine readability—so formatting and proof signals matter more.

What should I measure, realistically?

Keep it simple: whether you appear for important prompts, whether competitors are cited instead, and whether your changes improve appearance/citation trends over time. Don’t build strategy on a single weekly snapshot.
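To avoid reading too much into one snapshot, you can aggregate observations per week and smooth them with a trailing average. A minimal sketch, assuming each observation is a hypothetical `(week, prompt, you_appeared, competitor_cited)` tuple that you record yourself or export from your tracking tool; `weekly_rates` and `rolling_mean` are illustrative helpers, not part of any product.

```python
from collections import defaultdict


def weekly_rates(observations):
    """Return {week: (appearance_rate, competitor_instead_rate)}, sorted by week."""
    totals = defaultdict(int)
    appeared = defaultdict(int)
    instead = defaultdict(int)
    for week, _prompt, you_appeared, competitor_cited in observations:
        totals[week] += 1
        appeared[week] += int(you_appeared)
        # "Instead" means a competitor was cited on a prompt where you did not appear.
        instead[week] += int(competitor_cited and not you_appeared)
    return {w: (appeared[w] / totals[w], instead[w] / totals[w]) for w in sorted(totals)}


def rolling_mean(values, window=4):
    """Trailing mean over the last `window` points (shorter at the start)."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out


observations = [
    ("2026-W01", "best x tools", False, True),
    ("2026-W01", "x vs y", False, False),
    ("2026-W02", "best x tools", True, False),
    ("2026-W02", "x vs y", False, True),
    ("2026-W03", "best x tools", True, False),
    ("2026-W03", "x vs y", True, False),
]
rates = weekly_rates(observations)
appearance = [a for a, _ in rates.values()]
print(rolling_mean(appearance, window=2))  # [0.0, 0.25, 0.75]
```

Comparing the smoothed series before and after a content change gives a steadier read than any single week, though with only a handful of prompts the numbers will still be noisy.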

If I don’t choose Frase, what else should I compare?

Compare tools by the workflow role you need (briefing, optimization, tracking). A broader shortlist can help: compare the best SEO content optimization tools in 2026.

Next step

If you think AI visibility tracking is relevant for your category, the most sensible next move is to verify what’s included in the current plans and whether the tracking layer matches your needs (prompts, competitors, reporting, and team access).